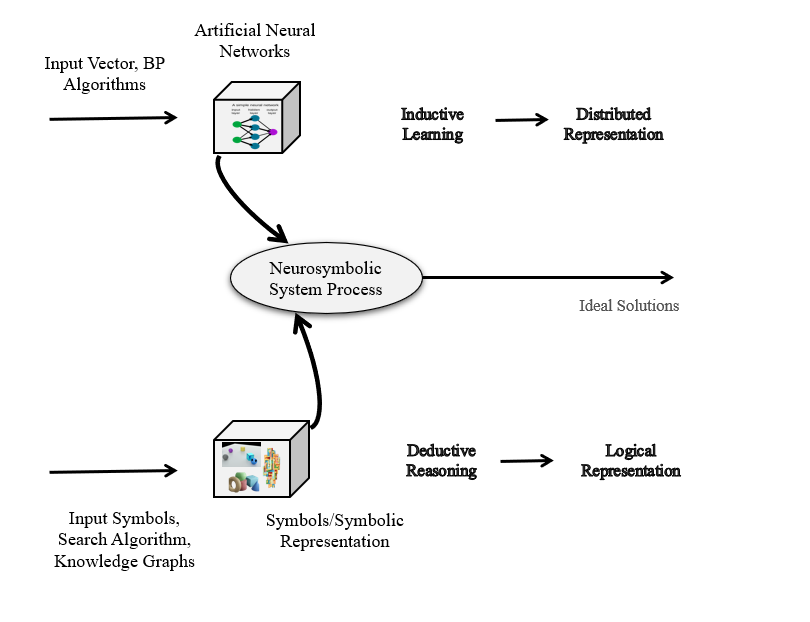

Neurosymbolic AI is not one algorithm. It is a family of approaches that try to combine two different strengths: the pattern-learning ability of neural networks and the structured reasoning ability of symbolic AI.

Neural systems are good at learning from messy data: images, text, speech, sensor streams, and large collections of examples. Symbolic systems are good at representing explicit knowledge: rules, logic, constraints, graphs, plans, and relationships.

The goal of neurosymbolic AI is to make these two sides work together.

This article is based on the paper “Neuro-symbolic approaches in artificial intelligence” by Hitzler, Eberhart, Ebrahimi, Sarker, and Zhou. The paper is useful because it does not treat neurosymbolic AI as a single architecture. Instead, it describes several ways neural and symbolic methods can be connected.

Why Combine Neural and Symbolic AI?

Deep learning has produced impressive results, but several limitations remain difficult to ignore.

Neural networks often need large amounts of data. They can struggle with systematic reasoning, logical consistency, out-of-distribution generalization, and explicit explanation. They may learn useful correlations without understanding the rules or structures behind them.

Symbolic AI has the opposite profile. It is more transparent and structured. It can represent domain knowledge, constraints, and reasoning procedures. But it can also be brittle when the world is noisy, uncertain, or difficult to fully formalize.

Neurosymbolic AI aims to combine:

- neural learning from data

- symbolic representation of knowledge

- reasoning over explicit structures

- better generalization from fewer examples

- improved explainability and trustworthiness

The interesting question is not whether neural or symbolic AI is better. The better question is: what role should each play in a working intelligent system?

1. Solving Symbolic Problems with Deep Learning

One approach is to train neural networks to solve problems that are traditionally symbolic.

Examples include:

- logical deduction

- rule learning

- planning

- term rewriting

- elementary algebra

- theorem proving

- program synthesis

- abductive reasoning

These tasks usually require structured manipulation of symbols. A classic symbolic system would solve them using explicit algorithms. A neural system tries to learn the behavior from examples.

Why do this?

First, it tests the limits of deep learning. If a neural model can learn logical or algebraic reasoning, that tells us something about what neural representations can capture.

Second, a trained neural module may be faster or more robust to noisy inputs than a purely symbolic procedure, especially when the symbolic problem is embedded in messy real-world data.

But this approach also has a risk. A neural model may imitate symbolic reasoning without truly preserving the guarantees of symbolic reasoning. For example, it may perform well on familiar examples but fail on longer formulas, deeper plans, or unusual compositions.

So the key challenge is systematic generalization: can the model apply learned symbolic behavior to structures that are larger or different from those seen during training?

2. Using Symbolic Knowledge to Improve Deep Learning

Another direction is to inject symbolic knowledge into neural systems.

This can include:

- knowledge graphs

- ontologies

- logical rules

- structured metadata

- domain constraints

- taxonomies

- background theories

Deep learning models usually learn from data alone. But in many domains, we already have useful knowledge. A medical model can use clinical guidelines. A robotics model can use physical constraints. A conversational agent can use a knowledge graph. A recommendation system can use structured user and item metadata.

Symbolic knowledge can help neural models in several ways:

- improve data efficiency

- improve out-of-distribution generalization

- enforce consistency

- reduce nonsensical outputs

- support zero-shot or few-shot learning

- add domain context that raw data alone may not provide

For example, a vision model may recognize objects in an image, while a knowledge graph can tell the system that a bicycle has wheels, a person can ride a bicycle, and a helmet is related to safety. This background structure can help the model reason beyond pixels.

The main difficulty is integration. Symbolic knowledge is often discrete, structured, and rule-like. Neural networks usually operate on continuous vectors. Neurosymbolic research tries to bridge that gap.

3. Explainability Through Background Knowledge

Explainability is one of the strongest motivations for neurosymbolic AI.

A black-box model may produce a correct prediction, but in high-stakes domains, correctness is not always enough. Users may need to know why the system made that prediction, whether the reasoning is acceptable, and whether the system relied on inappropriate features.

Symbolic knowledge can make explanations more meaningful.

Instead of explaining a prediction only through raw input features, a neurosymbolic system can connect the prediction to concepts, rules, or relationships.

For example:

- A medical model can explain a diagnosis using symptoms, risk factors, and clinical rules.

- A cybersecurity model can connect an alert to known attack patterns.

- A legal decision-support model can refer to rules, exceptions, and precedent-like structures.

- A visual reasoning model can explain that an object is likely a “cup” because it has a handle, an opening, and is located on a table.

This kind of explanation is closer to human reasoning than a heatmap alone. A heatmap may show where the model looked. Symbolic background knowledge can help explain what the model recognized and why that recognition mattered.

Still, explanation must be handled carefully. A symbolic explanation should be faithful to the model’s actual behavior, not merely a readable story attached after the prediction. Otherwise, the system may look more trustworthy than it really is.

4. Coupling Neural and Symbolic Components

The most direct neurosymbolic approach is to build hybrid systems where neural and symbolic components work together.

There are many ways to couple them.

A symbolic planner may call a neural perception module. A neural model may produce candidate symbols that are checked by a logic reasoner. A reinforcement-learning agent may use symbolic planning to reduce exploration. A language model may retrieve structured facts from a knowledge base before answering. A neural theorem prover may combine embeddings with logical rules.

This pattern is common in complex tasks because no single method is sufficient.

Consider a robot operating in a home. It needs neural perception to recognize objects, speech, gestures, and scenes. But it also needs symbolic reasoning to follow instructions, respect constraints, plan steps, and avoid unsafe actions.

The neural part answers questions like:

- What objects are present?

- What did the human say?

- What is the current scene state?

The symbolic part answers questions like:

- What actions are allowed?

- What sequence of steps satisfies the goal?

- Which constraints must not be violated?

- What explanation can be given for the chosen action?

The strength of coupled systems is flexibility. The weakness is engineering complexity. The interface between neural and symbolic modules must be carefully designed, because errors in one component can propagate into the other.

5. Tight Integration: Differentiable Logic and Neural Reasoning

Some neurosymbolic systems go beyond loose coupling. They try to make symbolic reasoning differentiable, so that neural and symbolic parts can be trained together.

Examples include:

- logic tensor networks

- differentiable theorem proving

- neural logic machines

- probabilistic logic combined with neural embeddings

- differentiable rule learning

- constrained neural optimization

The appeal is clear: if symbolic constraints can participate in gradient-based learning, then a model can learn from data while being guided by logic.

For example, a model can be trained not only to classify examples correctly, but also to satisfy rules such as:

if x is a parent of y, then y is a child of x

or:

an object cannot be both completely inside and completely outside the same container at the same time

These constraints can reduce impossible predictions and make learning more structured.

The challenge is that logic is crisp, while neural optimization is continuous and approximate. Making logic differentiable often requires relaxation, and relaxation can weaken the original symbolic guarantees.

6. Neurosymbolic AI for Planning and Reinforcement Learning

Planning and reinforcement learning are natural areas for neurosymbolic AI.

Pure reinforcement learning can require enormous exploration. Symbolic planning can reduce that burden by giving the agent structured options, action models, goals, and constraints.

For example, an agent may learn low-level control with neural networks while using symbolic planning for high-level decisions. In robotics, the neural part may learn how to grasp an object, while the symbolic planner decides that the object must first be located, then approached, then grasped, then placed.

This kind of decomposition improves sample efficiency and makes the policy easier to inspect.

It also connects with Neurosymbolic Reinforcement Learning: An Overview, where the same idea appears in more detail: learning and planning become more powerful when perception, symbolic abstraction, and decision-making are combined.

Why These Approaches Matter

Neurosymbolic AI matters because many real-world problems require more than pattern recognition.

A system may need to:

- detect objects from raw sensory data

- reason about relations between objects

- obey rules and constraints

- explain its decisions

- generalize to new combinations

- use prior domain knowledge

- plan over long horizons

- remain reliable when data is incomplete

Pure neural systems can struggle with these requirements. Pure symbolic systems can struggle with perception and uncertainty. Hybrid systems are an attempt to build something more practical.

Open Challenges

The field still has hard problems.

First, there is no single standard architecture. “Neurosymbolic AI” covers many different designs, from loose pipelines to tightly integrated differentiable logic.

Second, evaluation is difficult. A model may look neurosymbolic, but does it actually reason better, explain better, or generalize better? Benchmarks need to test compositionality, faithfulness, robustness, and data efficiency, not just accuracy.

Third, integration is technically hard. Symbolic systems use discrete structures. Neural systems use continuous representations. Making them communicate cleanly is still an active research problem.

Fourth, interpretability is not automatic. Adding symbols can improve explanation, but a complex hybrid system can still be difficult to understand if the neural and symbolic parts interact opaquely.

Takeaway

Neurosymbolic AI is best understood as a set of design strategies for combining learning and reasoning.

Some approaches train neural networks to perform symbolic tasks. Some inject symbolic knowledge into deep learning. Some use symbolic structures for explanation. Some build hybrid systems where neural perception and symbolic reasoning cooperate. Others try to make logic itself differentiable.

The promise is not that neurosymbolic AI will replace deep learning or classical AI. The promise is that many important problems need both: the ability to learn from data and the ability to reason with structure.

That combination is what makes neurosymbolic AI one of the most important directions for building more reliable, explainable, and general AI systems.

References

- Hitzler, P., Eberhart, A., Ebrahimi, M., Sarker, M. K., and Zhou, L. (2022). Neuro-symbolic approaches in artificial intelligence. National Science Review, 9(6), nwac035.

- Acharya, K., Raza, W., Dourado, C., Velasquez, A., and Song, H. H. (2023). Neurosymbolic reinforcement learning and planning: A survey. IEEE Transactions on Artificial Intelligence.