Trustworthy AI is not just about getting high benchmark scores. A model can be accurate and still be hard to inspect, unfair to certain groups, fragile under distribution shift, unsafe in deployment, or careless with private data.

The paper “Neuro-Symbolic methods for Trustworthy AI: a systematic review” by Cyprien Michel-Deletie and Md Kamruzzaman Sarker looks at this problem through a neuro-symbolic lens. It asks a practical question: how are recent neuro-symbolic methods actually being used to make AI systems more trustworthy?

The answer is both promising and uneven. Neuro-symbolic AI has strong potential for trustworthiness, especially interpretability. But the review also shows that most current work is concentrated on explanations, while fairness, privacy, and safety receive much less attention.

What Neuro-Symbolic AI Brings

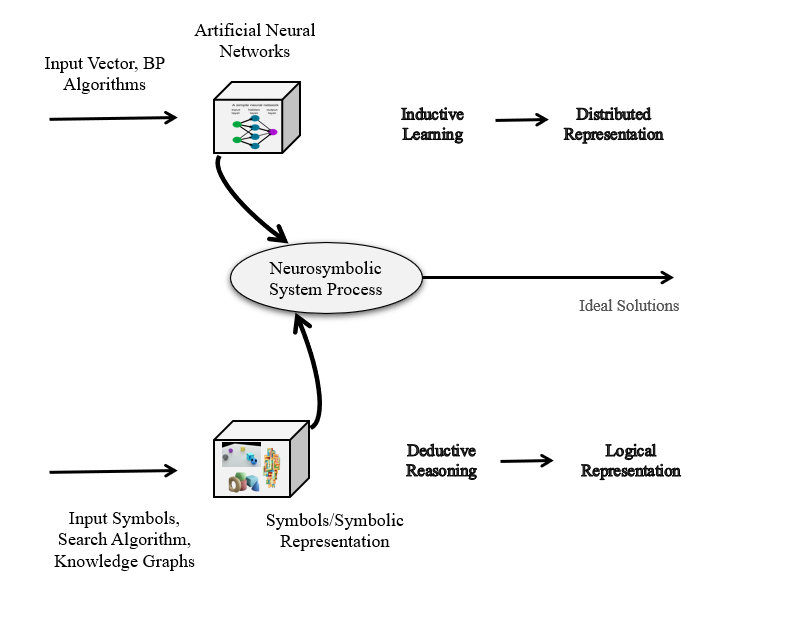

Neuro-symbolic AI combines two traditions that have often been treated separately.

Neural systems are strong at learning from raw data. They can recognize patterns in images, text, speech, and complex sensor streams. But they are often difficult to inspect, sensitive to adversarial inputs, and weak at explicit reasoning.

Symbolic systems work with rules, logic, graphs, programs, ontologies, and structured knowledge. They are easier to inspect and can support reasoning, but they often struggle with noisy data and large-scale perception.

Neuro-symbolic AI tries to use both strengths:

- neural learning for perception, pattern recognition, and representation learning

- symbolic reasoning for structure, explanation, constraints, and knowledge integration

That combination is why the field is relevant to trustworthiness. If a system can learn from data while also exposing symbolic structure, it may be easier to debug, explain, constrain, and audit.

What Trustworthiness Means

The paper treats trustworthiness as a broad property of AI systems. Performance matters, but it is only the starting point. A trustworthy AI system should also be understandable, fair, robust, private, and safe.

Interpretability means users can understand how a system works or why it made a decision. This is the most developed part of the trustworthy AI literature and also the most common focus of neuro-symbolic work.

Fairness means the system avoids discriminatory behavior, especially in decisions about people: hiring, lending, healthcare, criminal justice, education, and similar domains.

Privacy means the system protects sensitive training data and does not leak private information through model behavior or user interaction.

Robustness means the system continues to behave correctly under changes in data distribution, noisy inputs, or adversarial manipulation.

Safety means the system avoids harmful unintended behavior, especially when deployed in high-stakes or autonomous settings.

A useful way to summarize the paper is this: neuro-symbolic AI is naturally aligned with interpretability, but trustworthy AI requires more than interpretability.

Why Interpretability and Neuro-Symbolic AI Fit Together

Symbols are good explanation carriers. Humans often explain decisions using rules, categories, relations, causes, goals, and constraints. Those are symbolic objects.

For example:

- a decision tree explains through a sequence of tests

- a rule explains through conditions and a conclusion

- a knowledge graph explains through relationships between entities

- a logic proof explains through intermediate reasoning steps

- a program explains through executable structure

This makes neuro-symbolic AI attractive for explainability. Instead of only producing a heatmap or a feature-importance score, a neuro-symbolic system can sometimes produce an explanation closer to how people reason: “this follows because these conditions hold” or “this entity is connected to that entity through this relation.”

That does not automatically make every neuro-symbolic system trustworthy. A symbolic explanation can still be incomplete, misleading, or too complex. But symbolic structure gives researchers a useful handle for making explanations more inspectable.

What the Review Studied

The authors reviewed recent work from major AI venues between 2021 and May 2023. The venues included NeurIPS, AAAI, IJCAI, ICLR, ICML, NeSy, ACM FAccT, and KDD.

They searched for papers that combined neuro-symbolic methods with trustworthiness goals. A paper was included only when symbolic knowledge played an active role in the system, not merely when the final explanation happened to look symbolic.

That distinction matters. A model that prints a rule after making a prediction is not necessarily neuro-symbolic. For the review, the symbolic part had to contribute to the method’s trustworthiness.

The final set contained 54 papers.

The most important finding was the imbalance:

- almost all selected papers focused on interpretability

- only one focused mainly on fairness

- only one focused mainly on safety

- privacy was barely represented

This does not mean neuro-symbolic AI cannot help with fairness, privacy, or safety. It means the research community has not explored those directions nearly as much as interpretability.

Symbolic Structures Used in Trustworthy NeSy

The review separates neuro-symbolic methods by the kind of symbolic structure they use.

One large group is rule learning. These methods use neural components to learn symbolic rules, decision trees, or transparent classifiers. The symbolic output is itself part of the interpretability story.

Beyond rule learning, the paper identifies three broad families.

Logic structures use propositions, rules, constraints, or reasoning formulas. These are useful when the explanation needs to show why a conclusion follows from explicit conditions.

Graphs include knowledge graphs, scene graphs, proof graphs, Abstract Meaning Representation graphs, and other relational structures. Graphs are useful when trust depends on relationships: entities, facts, dependencies, causes, or evidence chains.

Other symbolic structures include symbolic object descriptions, action models, program-like representations, and symbolic languages. This category is diverse, but it shows that neuro-symbolic AI is not limited to formal logic.

The review found that logic, graphs, and other symbolic structures were all well represented. That is a healthy sign: trustworthy neuro-symbolic AI is not one method, but a family of design patterns.

Types of Interpretability

Because interpretability dominated the reviewed papers, the authors classified those papers along three common dimensions.

The first dimension is local versus global.

Local explanations explain one prediction. For example: why did the model reject this application?

Global explanations explain the system’s broader behavior. For example: what rules does the model generally follow?

There is also a middle ground: explanations for a subgroup or category of inputs.

The second dimension is ante-hoc versus post-hoc.

Ante-hoc methods are interpretable by design. Their internal structure is meant to be understandable from the start.

Post-hoc methods explain a model after it has already made a prediction. This can be useful, especially for powerful black-box models, but it carries a risk: the explanation may not faithfully represent the model’s actual reasoning.

The third dimension is model-specific versus model-agnostic.

Model-specific methods are tied to a particular architecture or learning method. Model-agnostic methods can be applied more broadly.

The review found a range of approaches across these dimensions. There was no single dominant pattern, which suggests that neuro-symbolic interpretability is still a diverse and developing area.

Where These Systems Are Applied

The reviewed papers covered many application areas.

Some worked with visual data: image classification, action recognition, visual relation detection, visual question answering, and video reasoning.

Some focused on natural language: fake news detection, question answering, text classification, medical dialogue, commonsense reasoning, and news recommendation.

Others dealt with graph tasks, reinforcement learning, adaptive management, time-series analysis, congestion control, program safety, and computer algebra.

This variety is important. It shows that neuro-symbolic methods are not tied to one narrow domain. They can support trustworthiness wherever a system benefits from combining learned representations with explicit structure.

The Missing Pieces: Fairness, Privacy, and Safety

The most useful part of the review is also the most critical: current neuro-symbolic trustworthiness research is too concentrated on interpretability.

Fairness is a natural area for neuro-symbolic work. Fairness constraints often involve explicit rules, protected attributes, counterfactual reasoning, or domain-specific norms. Symbolic constraints could help represent what a system is allowed or not allowed to do.

Privacy is less obvious, but still worth exploring. Symbolic abstractions might help separate sensitive information from task-relevant structure, or support policy-aware reasoning about data access. The review does not claim this is solved; it points out that the area is underexplored.

Safety is also promising. Many safety problems involve rules, preconditions, forbidden actions, causal constraints, and verification. These are symbolic ideas. A neuro-symbolic system could use neural perception while relying on symbolic constraints to prevent unsafe behavior.

The paper’s message is not that neuro-symbolic AI has already solved trustworthy AI. It is that the field has strong tools for interpretability, and those tools should be extended to the broader trustworthiness agenda.

A Caution About Explanations

The paper also highlights an important warning: explanations are not a silver bullet.

An explanation can increase user confidence without actually improving decision quality. Novices and experts may use explanations differently. Human-AI teams may become faster but not necessarily more accurate when explanations are added. Post-hoc explanations may be persuasive while failing to reflect the real model behavior.

This matters for neuro-symbolic AI. Symbolic explanations can look very convincing because they resemble human reasoning. But an explanation should still be evaluated for faithfulness, usefulness, and the risk of overtrust.

For trustworthy AI, the goal is not merely to produce explanations. The goal is to produce explanations that help people make better decisions about when to rely on the system and when to question it.

Open Challenges

The review points to several research gaps.

First, the field needs better terminology. Many papers use neuro-symbolic ideas without calling them neuro-symbolic, while others use the label in very broad ways. This makes comparison difficult.

Second, the community needs stronger evaluation standards. Interpretability is often self-assessed by researchers. A method may be described as explainable without enough evidence that users can actually understand it or use it appropriately.

Third, trustworthiness needs to move beyond interpretability. Fairness, privacy, safety, robustness, accountability, and auditability all deserve more neuro-symbolic research.

Finally, neuro-symbolic systems must balance power and transparency. Adding symbolic structure can help, but it can also make systems more complex if the neural and symbolic parts interact in ways users cannot inspect.

Takeaway

Neuro-symbolic AI is a strong candidate for building more trustworthy AI systems because it brings together learning and reasoning. Neural components help systems learn from messy data. Symbolic components help represent rules, relationships, constraints, and explanations.

But the systematic review shows that the field is still uneven. Most current work uses neuro-symbolic methods to improve interpretability. That is valuable, but trustworthiness is broader.

The next step is to apply neuro-symbolic ideas more seriously to fairness, privacy, safety, and robustness. A trustworthy system should not only explain itself. It should behave reliably, respect constraints, protect people, and make its reasoning open to inspection.

References

- Michel-Deletie, C., and Sarker, M. K. (2024). Neuro-Symbolic methods for Trustworthy AI: a systematic review. Neurosymbolic Artificial Intelligence, IOS Press.