Artificial intelligence has always had two competing instincts.

One instinct says intelligence should be built from rules, symbols, logic, and explicit knowledge. This is the symbolic AI tradition. It is good at representing facts, relationships, constraints, and reasoning steps.

The other instinct says intelligence should be learned from data, examples, patterns, and experience. This is the neural or connectionist tradition. It is good at perception, prediction, language, images, speech, and other messy real-world inputs.

Neurosymbolic AI is the attempt to bring these two instincts together.

The motivation is straightforward: modern deep learning is powerful, but it often struggles with reasoning, causality, interpretability, and common sense. Symbolic AI is interpretable and structured, but it can be brittle when the world is noisy, uncertain, or difficult to describe with hand-written rules.

The interesting question is not which side should win. The better question is:

Can we build systems that learn from data and reason with structure?

That is the promise of neurosymbolic AI.

Neural AI and Symbolic AI

Symbolic AI was one of the earliest approaches to artificial intelligence. It represents knowledge using symbols, rules, logic, ontologies, graphs, and structured relationships. A symbolic system might know that:

- every aircraft has operational constraints

- every constraint must be satisfied before a route is feasible

- if weather violates a safety threshold, the route should be rejected

This kind of representation is useful because the reasoning process can often be inspected. We can ask why a decision was made and trace it through rules or logical relationships.

Deep learning works differently. Instead of explicitly writing the rules, we train a model on data. A neural network may learn to classify images, predict demand, translate language, or forecast time series by adjusting millions or billions of parameters.

This is powerful because many real-world patterns are hard to write down as rules. But the learned representation is often opaque. A model may produce a correct prediction without giving a clear explanation of the reasoning behind it.

The two approaches have complementary strengths:

- Neural models are strong at perception, pattern recognition, and learning from raw data.

- Symbolic models are strong at reasoning, constraints, compositionality, and explicit knowledge.

Neurosymbolic AI tries to use both.

Why Neurosymbolic AI Matters

Deep learning has advanced rapidly, but several problems remain difficult:

- Common sense reasoning: Neural models can make mistakes that are obvious to humans because they lack grounded world knowledge.

- Causality: Correlation learned from data is not the same as understanding cause and effect.

- Data efficiency: Many neural models require large amounts of labeled data.

- Explainability: In safety-critical settings, a prediction without a reason is often not enough.

- Generalization: Models can fail when the test environment differs from the training distribution.

- Constraint satisfaction: Some decisions must obey hard rules, not just statistical preferences.

Neurosymbolic AI is attractive because it can add structure around learning. A neural model can process raw inputs, while symbolic components can represent domain knowledge, enforce constraints, or provide reasoning steps.

For example, in an autonomous driving system, a neural network may detect pedestrians, vehicles, signs, and lanes. A symbolic reasoning layer can then reason about traffic rules, right-of-way, safety constraints, and possible actions.

In a medical decision-support system, a neural model may identify patterns in scans or patient records. A symbolic layer can connect those patterns with clinical rules, treatment constraints, known risk factors, and explainable recommendations.

The goal is not to make neural networks disappear. The goal is to make learning systems more reliable, interpretable, and useful in complex domains.

What Is Neurosymbolic AI?

At a high level, neurosymbolic AI is a family of methods that combine:

- neural networks or other statistical learning models

- symbolic representations such as rules, logic, programs, knowledge graphs, or constraints

- mechanisms for reasoning, explanation, or structured decision-making

The combination can happen in many ways. Sometimes a neural model feeds symbols into a reasoning system. Sometimes symbolic knowledge is injected into a neural model. Sometimes the two components interact during training.

The common theme is that the system should be able to learn and reason.

Learning helps the system deal with noisy, high-dimensional data. Reasoning helps the system use structure, rules, and relationships that are difficult to learn purely from examples.

Six Ways to Combine Neural and Symbolic AI

Henry Kautz described several ways to think about neural-symbolic integration. The diagrams below summarize six broad patterns. The notation can look abstract at first, but the underlying ideas are approachable.

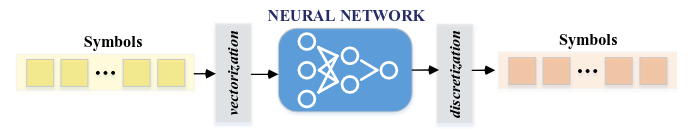

Symbolic Neuro Symbolic

In this pattern, symbolic inputs are converted into neural representations, processed by a neural model, and then converted back into symbolic outputs.

This is common in many modern AI systems. Text, tokens, labels, or structured categories are embedded into vectors, processed by a neural network, and then mapped back into words, labels, or symbolic actions.

The strength of this approach is flexibility. The weakness is that the symbolic reasoning may only exist at the input and output boundaries, while the internal process remains mostly neural and opaque.

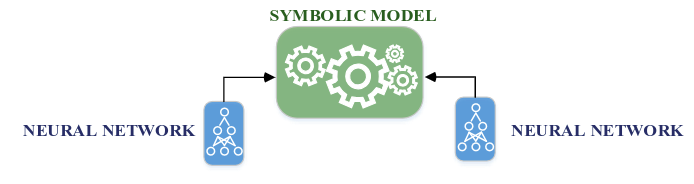

Symbolic[Neuro]

Here, a symbolic system remains in control, but it calls a neural component as a subroutine.

This is useful when a system needs both perception and explicit reasoning. A planning system might use a neural network to recognize objects in an image, then use symbolic rules to decide what actions are allowed.

One way to think about this pattern is: the neural model answers “what is present?”, and the symbolic system answers “what should be done with that information?”

Neuro | Symbolic

This pattern is a pipeline. A neural model processes raw input and produces a symbolic representation. A symbolic reasoner then operates on that representation.

The Neuro-Symbolic Concept Learner is a good example of this style. A neural perception module extracts objects and attributes from images, and a symbolic reasoning module answers questions using those extracted concepts.

This is attractive because it separates perception from reasoning. But it also creates a dependency: if the neural module extracts the wrong symbols, the symbolic reasoner may confidently reason over incorrect information.

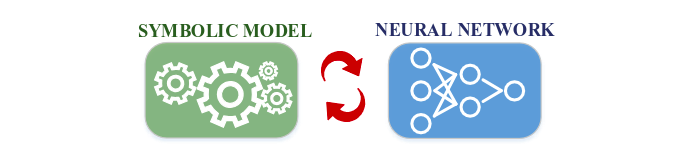

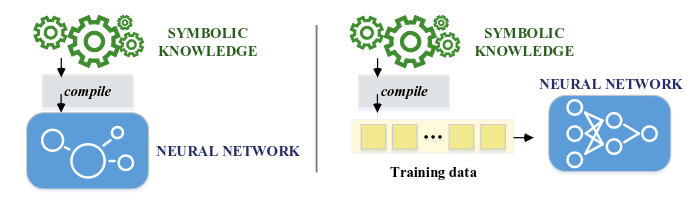

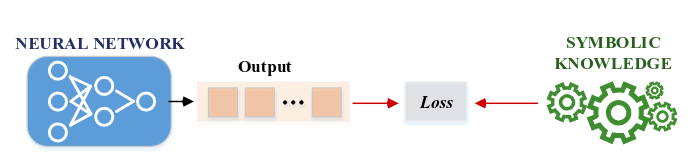

Neuro: Symbolic -> Neuro

In this pattern, symbolic knowledge is used to guide or train a neural model. Rules, programs, equations, or logical constraints become part of the learning process.

This is useful when we already know something about the domain. For example, physical laws, safety rules, grammar constraints, or mathematical identities can be used to regularize training or restrict the space of possible solutions.

The benefit is data efficiency. If a model does not have to rediscover known rules from scratch, it can learn more effectively from fewer examples.

Neuro_{Symbolic}

This approach encodes symbolic statements directly into neural structures.

Logic Tensor Networks are an example of this family. They combine neural learning with differentiable logical constraints, allowing a system to learn while still respecting symbolic relationships.

This direction is appealing because it tries to make reasoning differentiable. The challenge is that translating rich symbolic reasoning into neural computation can be technically difficult and may not preserve all the clarity of explicit logic.

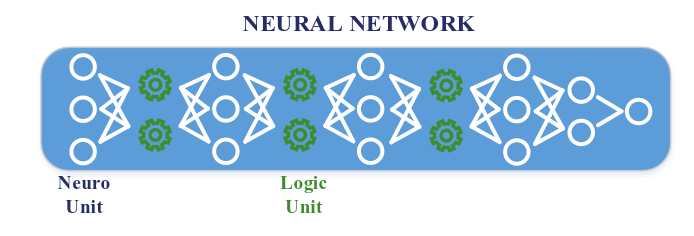

Neuro[Symbolic]

In this pattern, symbolic reasoning is embedded inside a neural system.

This is one of the most ambitious forms of neurosymbolic AI. The goal is not just to attach a reasoner to a neural model, but to build systems where learning and reasoning interact deeply.

This kind of integration is difficult, but it is important for tasks that require perception, abstraction, planning, and multi-step inference.

How to Think About Integration

Another useful way to understand neurosymbolic AI is to ask three questions.

First, how tightly are the neural and symbolic parts connected? In a hybrid system, the components may run separately and exchange information. In an integrated system, symbolic knowledge may be embedded directly into the neural architecture or training objective.

Second, what kind of symbolic language is being used? Some systems use general symbolic structures such as graphs or programs. Others use formal logic, which can support more precise reasoning but may be harder to scale.

Third, what is the symbolic component doing? It may help the model learn, explain, reason, constrain outputs, extract rules, or represent knowledge.

These distinctions matter because “neurosymbolic AI” is not a single algorithm. It is a broad design space.

Where Neurosymbolic AI Is Useful

Neurosymbolic AI is most useful when a task requires both pattern recognition and structured reasoning.

Healthcare and Scientific Discovery

Medical AI often needs to combine data-driven prediction with domain knowledge. A neural model can detect patterns in images, signals, or patient records. Symbolic knowledge can represent clinical guidelines, biological relationships, causal assumptions, and safety constraints.

This is especially useful in areas such as drug repurposing, diagnosis support, disease progression modeling, and rare disease analysis, where pure black-box prediction is not enough.

Cybersecurity and Safety-Critical Systems

Cybersecurity systems need to recognize patterns of attack, but they also need rule-based reasoning about permissions, vulnerabilities, behaviors, and constraints.

A neurosymbolic approach can combine learned anomaly detection with explicit logic about what actions are allowed, suspicious, or unsafe. This is valuable because security decisions often require explanation and traceability.

Robotics and Autonomous Systems

Robots and autonomous vehicles need perception, but perception alone is not enough. They must reason about objects, actions, affordances, goals, and constraints.

A robot may use neural perception to detect a cup, a table, or a human hand. It may then use symbolic reasoning to decide whether the cup can be grasped, whether the action is safe, and what sequence of steps should follow.

Natural Language and Dialogue

Language models are powerful, but they can still struggle with consistency, factual grounding, and multi-step reasoning. Symbolic structures such as knowledge graphs, programs, and logical constraints can help ground language in explicit relationships.

In dialogue systems, a neural model may generate candidate actions or responses, while a symbolic module checks whether they satisfy task constraints or domain rules.

Recommender Systems and Decision Support

Recommendation is not only about predicting what a user may click. In many settings, recommendations must respect constraints, context, risk, freshness, fairness, or business rules.

Neurosymbolic systems can combine learned user preferences with explicit reasoning about context and constraints. This is useful in decision-support settings where the system must justify why a recommendation was made.

Smart Cities and Transportation

Urban systems involve data, infrastructure, policies, and constraints. Neural models can forecast demand, traffic, or mobility patterns. Symbolic reasoning can represent regulations, safety rules, network constraints, and planning objectives.

This combination is relevant for smart cities, transportation planning, disaster response, and Advanced Air Mobility, where predictions must be connected to operational decisions.

Benefits

The main benefits of neurosymbolic AI are:

- Better explainability: Symbolic components can expose reasoning steps or constraints.

- Improved data efficiency: Prior knowledge can reduce the amount of data needed.

- Stronger generalization: Structured knowledge can help models transfer beyond observed examples.

- Constraint satisfaction: Hard rules can prevent invalid or unsafe outputs.

- Causal reasoning: Symbolic models can represent relationships beyond correlation.

- Compositionality: Systems can combine smaller concepts into larger reasoning chains.

These benefits are especially important in domains where mistakes are costly and decisions must be justified.

Limitations

Neurosymbolic AI is promising, but it is not a magic solution.

The first challenge is integration. Neural networks and symbolic systems have different assumptions, representations, and optimization methods. Making them work together cleanly is hard.

The second challenge is scalability. Symbolic reasoning can become expensive as the number of facts, rules, and possible relationships grows.

The third challenge is knowledge engineering. Many symbolic systems still require carefully designed rules or ontologies, and building them can be labor-intensive.

The fourth challenge is uncertainty. Real-world data is noisy, incomplete, and ambiguous. Symbolic systems must be able to reason under uncertainty, not only with perfectly clean facts.

Finally, there is still no single dominant architecture for neurosymbolic AI. The field contains many promising directions, but the best design depends heavily on the task.

Future Directions

The future of neurosymbolic AI will likely move in several directions:

- learning symbolic rules from data

- using knowledge graphs with neural models

- making logical reasoning differentiable

- combining language models with external tools and formal reasoning

- building systems that can explain decisions in human-understandable terms

- enforcing safety and operational constraints in real-time AI systems

Large language models have also renewed interest in this area. They are strong at language and pattern completion, but they still benefit from retrieval, tools, structured knowledge, verification, and planning. In that sense, many modern AI systems are moving toward neurosymbolic design even when they do not use that label explicitly.

Takeaway

Neurosymbolic AI is important because it addresses a central weakness of modern machine learning: prediction alone is not the same as understanding.

Neural networks help machines learn from experience. Symbolic reasoning helps machines use structure, rules, and relationships. Bringing them together can make AI systems more interpretable, data-efficient, reliable, and useful in domains where decisions need to be explained and constrained.

The core idea is simple:

Learning gives AI perception. Reasoning gives AI structure. Neurosymbolic AI tries to give systems both.

References

- Henry Kautz. The Third AI Summer. AI Magazine, 2022.

- Garcez, A. d., & Lamb, L. C. Neurosymbolic AI: The 3rd Wave. arXiv:2012.05876, 2020.

- Bouneffouf, D., & Aggarwal, C. C. Survey on Applications of Neurosymbolic Artificial Intelligence. arXiv:2209.12618, 2022.

- Badreddine, S., d’Avila Garcez, A., Serafini, L., & Spranger, M. Logic Tensor Networks. Artificial Intelligence, 2022.

- Mao, J., Gan, C., Kohli, P., Tenenbaum, J. B., & Wu, J. The Neuro-Symbolic Concept Learner. arXiv:1904.12584, 2019.

- Wang, W., Yang, Y., & Wu, F. Towards Data-and Knowledge-Driven Artificial Intelligence: A Survey on Neuro-Symbolic Computing. arXiv:2210.15889, 2022.