Neurosymbolic AI depends on a simple but deep question:

How should knowledge be represented, and where should it enter the neural system?

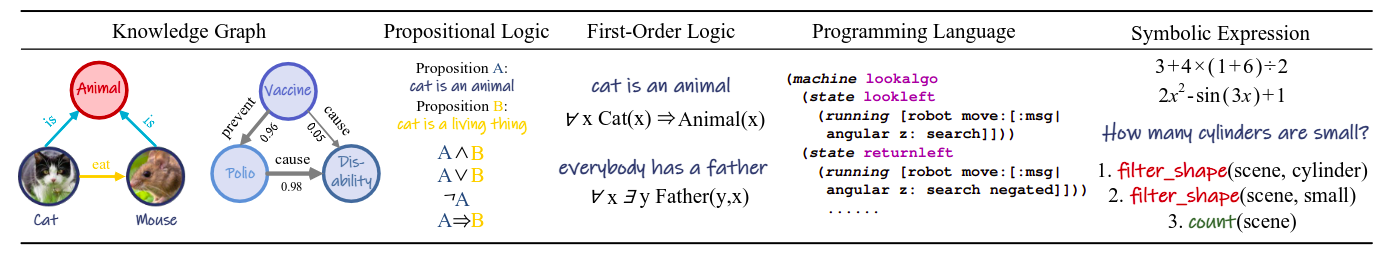

The first part is about knowledge representation. Do we represent knowledge as a graph, a logical rule, a program, or a symbolic expression?

The second part is about knowledge embedding. Once the knowledge is represented, do we put it into the data, the learned representation, the architecture, or the inference process?

These two choices shape what a neuro-symbolic system can learn, how it reasons, and how easy it is to inspect.

This article is based on the survey “Towards Data-and Knowledge-Driven Artificial Intelligence: A Survey on Neuro-Symbolic Computing” by Wang, Yang, and Wu. The useful idea from the survey is that neuro-symbolic systems can be understood along two axes: the form of symbolic knowledge and the place where that knowledge is embedded.

Why Knowledge Representation Matters

Neural networks usually operate on vectors. Symbolic knowledge usually comes as structured objects: entities, relations, rules, programs, constraints, or expressions.

To combine them, we need a bridge.

That bridge matters because different representations support different kinds of reasoning. A knowledge graph is good for relational facts. Logic is good for rules and constraints. Programs are good for executable procedures. Symbolic expressions are good for algebra, equations, and structured generation.

Choosing the wrong representation can make a problem harder than it needs to be. Choosing the right one can make learning more efficient, explanations clearer, and predictions more consistent.

1. Knowledge Graphs

Knowledge graphs represent entities and relationships as a graph.

A common format is the triple:

(Subject, Predicate, Object)

For example:

(Paris, capital_of, France)(person, rides, bicycle)(tumor, has_property, malignant)(aircraft, must_satisfy, safety_constraint)

The subject and object are entities. The predicate is the relation between them. In graph form, entities become nodes and relations become labeled edges.

Knowledge graphs are widely used in neuro-symbolic AI because they naturally represent structure. They are useful in natural language processing, recommendation systems, visual reasoning, question answering, medical AI, and robotics.

In computer vision, graphs can represent relationships between objects in a scene: a cup is on a table, a person is holding a phone, a car is behind a bicycle. In NLP, graphs can represent factual knowledge or semantic relations. In recommendation, graphs can connect users, items, categories, and preferences.

The strength of knowledge graphs is relational reasoning. The weakness is that graph construction and maintenance can be difficult. A graph may be incomplete, noisy, biased, or too sparse for the task.

2. Propositional Logic

Propositional logic represents knowledge with statements that are either true or false.

For example:

Rain -> WetRoad

This means: if it is raining, then the road is wet.

Propositional logic uses operators such as:

ANDORNOTIMPLIES

It is simple, clean, and relatively easy to connect with neural systems. Early neuro-symbolic systems often used propositional logic because it avoids some of the complexity of richer logical languages.

The limitation is expressiveness. Propositional logic treats statements as whole units. It cannot easily express relationships between objects, variables, or quantifiers.

For example, propositional logic can say:

SocratesIsHuman -> SocratesIsMortal

But it does not naturally express the general rule:

For every x, if x is human, then x is mortal.

That requires first-order logic.

3. First-Order Logic

First-order logic is more expressive because it introduces variables, predicates, and quantifiers.

For example:

forall x: Human(x) -> Mortal(x)

This rule applies to any object x. That makes first-order logic powerful for representing general knowledge.

It can express:

- relationships between objects

- universal rules

- existential claims

- nested structures

- constraints over variables

This is useful for neuro-symbolic AI because many real-world domains are relational. Medical reasoning, legal reasoning, planning, knowledge graphs, and scientific reasoning often need variables and rules that apply across many entities.

The challenge is computational. Full first-order logic is hard to embed into finite neural systems. Some approaches use restricted fragments such as Datalog. Others soften logic with fuzzy logic, compile clauses into differentiable objectives, use Markov logic networks, or combine logic programming with neural components.

The trade-off is clear: first-order logic gives more expressive power, but integration with deep learning becomes harder.

4. Programming Languages

Some neuro-symbolic systems represent knowledge as programs.

Programs are symbolic structures with syntax and semantics. They are not just descriptions; they can be executed.

Examples include:

- Prolog programs

- Datalog rules

- action languages

- domain-specific languages

- generated code

- symbolic programs for reasoning or planning

Program-based representations are useful when the task requires procedures. For example, a model may need to generate a program that solves a math problem, executes a query, controls an agent, or transforms an input structure.

This is especially important in program synthesis, semantic parsing, robotics, and planning. A neural model may propose a candidate program, while a symbolic executor checks whether it runs correctly.

The advantage is precision. If the program is valid and executable, the reasoning can often be inspected step by step.

The limitation is search. The space of possible programs can be huge, and small syntax errors can make a program invalid. Neural guidance can help search, but correctness still matters.

5. Symbolic Expressions

Symbolic expressions cover structured symbolic objects that do not fit neatly into the previous categories.

Examples include:

- algebraic expressions

- mathematical equations

- chemical expressions

- symbolic sequences

- syntax trees

- logical formulas

- operator-based compositions

These are common in scientific machine learning and symbolic regression. A model may learn an equation that explains physical behavior, generate a symbolic formula, or build a structured expression from smaller operators.

Symbolic expressions are useful because they can be compact and interpretable. An equation such as F = ma is not just a prediction rule; it is a reusable structure that carries meaning.

In neuro-symbolic systems, symbolic expressions are often represented as trees or graphs. Neural models can generate or score candidate expressions, while symbolic constraints check syntax, semantics, or physical validity.

Knowledge Embedding: Where Does the Symbolic Knowledge Go?

After choosing a representation, the next question is where to embed that knowledge in the neural pipeline.

The survey describes four broad locations:

- data

- sub-symbolic representation

- network architecture

- neural inference

Each location gives a different kind of neuro-symbolic system.

1. Knowledge Embedded in Data

The simplest strategy is to encode symbolic knowledge into the input data.

For example:

- represent a molecule as a graph

- represent a formula as a syntax tree

- represent a program as a token sequence

- represent a scene as objects and relations

- represent a knowledge graph as triples

This approach is attractive because it can use existing neural architectures. Sequence models can process symbolic strings. Graph neural networks can process graph-structured data. Tree-based encoders can process expression trees.

The advantage is engineering simplicity. The model does not need a custom reasoning engine.

The limitation is that the knowledge may only be implicit. The model receives structured data, but it may not obey the underlying symbolic rules. It can still generate invalid formulas, impossible molecules, inconsistent facts, or logically wrong conclusions unless additional constraints are added.

2. Knowledge Embedded in Sub-Symbolic Representations

Another strategy is to encode symbolic knowledge into learned vector representations.

This is often done by designing training objectives that reward consistency with symbolic knowledge. For example, a model can learn embeddings of entities and relations so that known facts are close together in vector space. Logic rules can also become soft constraints in the loss function.

This approach is common in knowledge graph embeddings and differentiable logic.

The advantage is that the neural model can stay mostly unchanged. Symbolic knowledge influences the representation through the objective function.

The weakness is that distributed representations are hard to inspect. A vector may encode symbolic knowledge, but it is not always clear where or how. There is also no guarantee that the model will always produce outputs that strictly satisfy the symbolic rules.

This is a recurring neuro-symbolic trade-off: soft embeddings are flexible, but they can weaken hard symbolic guarantees.

3. Knowledge Embedded in Network Architecture

Sometimes symbolic structure is built directly into the architecture.

Graph neural networks are a common example. If the knowledge is relational, a GNN can process nodes and edges in a way that reflects the structure of the graph.

Other examples include:

- neural modules aligned with grammar rules

- architectures that mirror compositional structure

- layers designed around physical constraints

- attention mechanisms over symbolic relations

- networks that explicitly model object-part relationships

The advantage is that the architecture has an inductive bias. It pushes the model toward solutions that respect the structure of the domain.

The downside is engineering cost. Designing the right architecture requires domain understanding and careful modeling choices. If the symbolic structure is wrong or incomplete, the architecture may constrain the model in harmful ways.

4. Knowledge Embedded in Neural Inference

The most explicit strategy is to inject symbolic knowledge during inference.

Here, symbolic constraints are used while the model is making predictions. The system may search only valid outputs, reject predictions that violate rules, or use an iterative reasoning process to enforce consistency.

Examples include:

- constrained decoding

- logic-guided inference

- semantic parsing with grammar constraints

- molecule generation with validity checks

- prediction adjusted by symbolic rules

- matrix-based implementations of logical reasoning

This approach can produce syntactically and semantically valid outputs because the symbolic knowledge actively shapes the final prediction.

The trade-off is computational complexity. Inference may become slower, and the system must carefully handle conflicts between neural scores and symbolic constraints.

How to Choose the Right Representation

There is no universally best option.

Use a knowledge graph when the task depends on entities and relations. Use propositional logic when the rules are simple and finite. Use first-order logic when variables and general rules matter. Use programs when reasoning must be executable. Use symbolic expressions when the target is formula-like, compositional, or mathematical.

Then decide where the knowledge should live.

If you want simplicity, encode it in the data. If you want soft guidance, put it in the representation or loss. If you want structural bias, build it into the architecture. If you need hard validity, enforce it during inference.

The best neuro-symbolic systems often combine several of these choices.

Why This Matters

Knowledge representation and embedding are not just implementation details. They determine the system’s reasoning style.

A model using knowledge graph embeddings will behave differently from a model using logic constraints during inference. A system that learns symbolic expressions will be evaluated differently from a system that stores knowledge in a program. Each choice affects interpretability, generalization, correctness, and trustworthiness.

This is why neuro-symbolic AI is hard but promising. It asks us to design not only models, but also the form of knowledge those models can use.

Takeaway

Neuro-symbolic AI starts with a design decision: what kind of knowledge are we using, and how will the neural model access it?

Knowledge can be represented as graphs, logic, programs, or symbolic expressions. It can be embedded in data, vector representations, architectures, or inference procedures.

The right combination depends on the task. But the principle is stable: neural learning becomes more powerful when it can use explicit structure, and symbolic reasoning becomes more practical when it can interact with learned representations.

That bridge between data and knowledge is the heart of neuro-symbolic AI.

References

- Wang, W., Yang, Y., and Wu, F. (2022). Towards Data-and Knowledge-Driven Artificial Intelligence: A Survey on Neuro-Symbolic Computing. arXiv:2210.15889.