Explainable AI and neurosymbolic AI are often mentioned together because both are connected to trust, reasoning, and human understanding. But they are not the same thing.

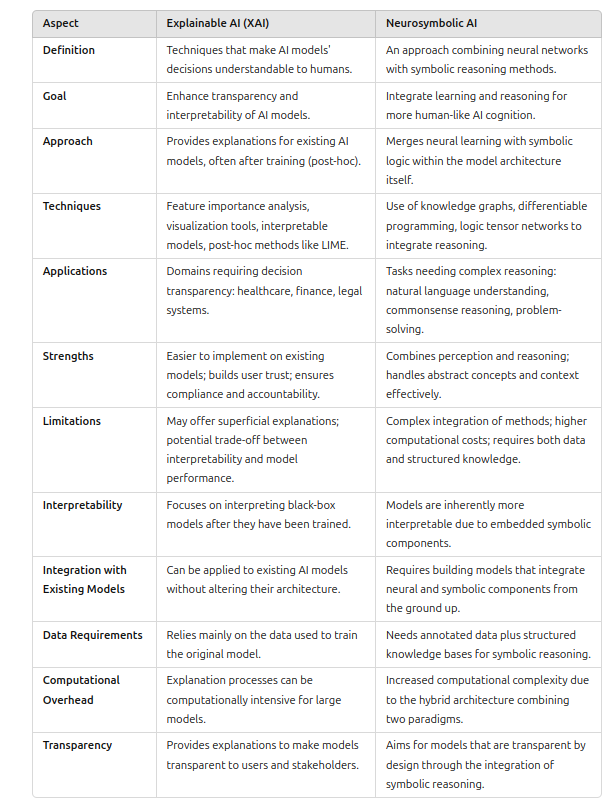

Explainable AI, or XAI, focuses on making model decisions understandable to humans. Neurosymbolic AI focuses on combining neural learning with symbolic reasoning. One is primarily about explanation. The other is primarily about architecture and reasoning.

They overlap, but they solve different parts of the trustworthy AI problem.

What Explainable AI Tries to Do

Explainable AI asks: why did the model make this decision?

A model may classify a loan applicant as high risk, flag a medical image, recommend a route, or reject a transaction. XAI methods try to make that decision more understandable by showing which features mattered, which examples were similar, what rule-like pattern was followed, or how the prediction changes under perturbations.

Common XAI techniques include feature importance, saliency maps, counterfactual explanations, partial dependence plots, LIME, SHAP, attention visualization, inherently interpretable models, and post-hoc explanation tools.

XAI is especially important when humans must audit, trust, contest, or act on model outputs.

What Neurosymbolic AI Tries to Do

Neurosymbolic AI asks: how can a system learn from data while also reasoning with explicit knowledge?

Neural networks are strong at perception, pattern recognition, and learning from large datasets. Symbolic AI is strong at representing rules, logic, constraints, concepts, and structured relationships. Neurosymbolic AI tries to combine these strengths.

Examples include neural models guided by logic constraints, knowledge graphs connected to language models, differentiable reasoning systems, symbolic planners supported by neural perception, and hybrid systems that use learned representations plus explicit rules.

The goal is not only to explain a trained model. The goal is to build systems that can reason more reliably in the first place.

The Key Difference

The simplest distinction is this:

- XAI explains what an AI system did.

- Neurosymbolic AI changes how the AI system thinks or reasons.

XAI can be applied after a model is trained. For example, SHAP can explain the predictions of a gradient boosting model or neural network without changing the model itself.

Neurosymbolic AI usually affects the model design. It may include symbolic rules, knowledge bases, constraints, programs, or reasoning modules inside the system.

Where They Overlap

Neurosymbolic AI can make explainability easier because symbolic components are often more inspectable than hidden neural activations. If part of the system uses explicit rules or a knowledge graph, a human may be able to inspect the reasoning path.

But neurosymbolic AI is not automatically explainable. A hybrid system can still be complex, and its neural components may remain opaque.

Likewise, XAI is not automatically neurosymbolic. A saliency map or SHAP explanation may explain a neural model without adding any symbolic reasoning to the system.

Strengths of XAI

XAI is practical because it can often be added to existing systems. Organizations can use explanation tools to audit models, communicate with users, diagnose failures, and support compliance.

XAI is especially useful for tabular models, computer vision models, risk scoring, fraud detection, healthcare decision support, and regulated domains where users need reasons.

Its limitation is that post-hoc explanations may be incomplete or misleading. An explanation can approximate model behavior without revealing the true internal cause of a decision.

Strengths of Neurosymbolic AI

Neurosymbolic AI is powerful when a task requires both learning and reasoning.

A self-driving system may need neural perception to identify objects and symbolic rules to obey traffic laws. A medical AI may need neural pattern recognition plus clinical knowledge. A planning system may need learned predictions plus logical constraints.

Its limitation is engineering complexity. Combining neural and symbolic components is difficult. The system must align representations, handle uncertainty, scale efficiently, and remain robust when symbolic knowledge is incomplete or wrong.

When to Use Which

Use XAI when the main problem is understanding, auditing, or communicating decisions from a model.

Use neurosymbolic AI when the main problem requires explicit reasoning, rules, constraints, structured knowledge, or compositional generalization.

Use both when the domain is high-stakes and reasoning-heavy. For example, aviation, healthcare, law, finance, robotics, and public safety may need systems that reason with rules and also explain their decisions.

Final Thoughts

Explainable AI and neurosymbolic AI are complementary. XAI helps humans understand AI behavior. Neurosymbolic AI helps build systems that combine learning with structured reasoning.

The distinction matters because adding an explanation tool does not necessarily make a system reason better, and adding symbolic reasoning does not automatically make every decision easy to explain.

Trustworthy AI will likely need both: models that can be inspected after they act, and architectures that reason more transparently before they act.

References

- DARPA, Explainable Artificial Intelligence. https://www.darpa.mil/research/programs/explainable-artificial-intelligence

- Gunning, D. and Aha, D., 2019. DARPA’s Explainable Artificial Intelligence Program. AI Magazine, 40(2), pp. 44-58. https://doi.org/10.1609/aimag.v40i2.2850

- Garcez, A.d., Gori, M., Lamb, L.C., Serafini, L., Spranger, M. and Tran, S.N., 2019. Neural-Symbolic Computing: An Effective Methodology for Principled Integration of Machine Learning and Reasoning. arXiv:1905.06088. https://arxiv.org/abs/1905.06088